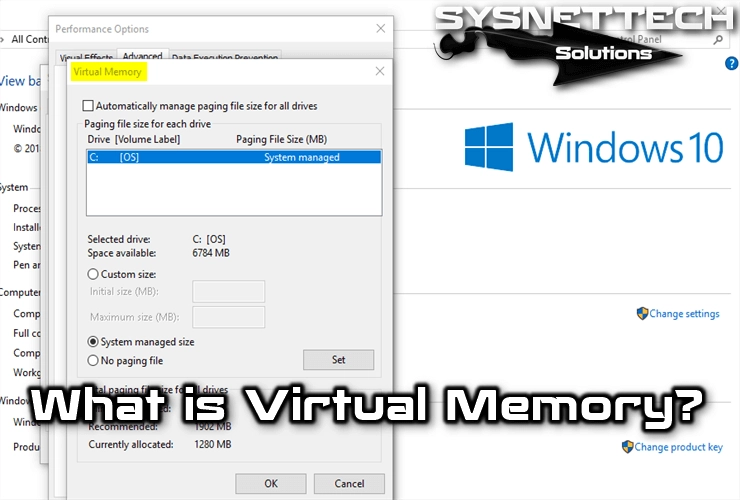

Virtual memory is a technique that lets you run processes that do not fit entirely in RAM (physical memory). It also makes it possible to write programs that are larger than the physical memory installed in the machine.

What Is Virtual Memory, and How Is It Used with RAM in the Windows Operating System?

Additionally, virtual memory provides an abstraction of RAM that separates physical memory from the logical address space that the user sees.

This makes things much easier for programmers as they don’t have to worry about memory limitations.

Virtual Memory Features

Virtual memory loads only the necessary parts of a program into RAM. The entire program does not have to be resident at once.

Virtual memory separates logical memory from physical RAM. It is usually implemented with demand paging. However, it can also be implemented in a system with segmentation.

When RAM runs low, the system falls back on a swap file on disk, which serves as auxiliary storage. Windows calls this the paging file (pagefile.sys) and uses it when it runs out of RAM. Data is moved between this file and RAM as needed.

This method frees up space in physical memory. However, it slows down the system, because disk access is much slower than RAM access. In return, a machine with less than 4GB of RAM can behave as if it had that much, and it can run multiple applications at once.

Most computers have four types of memory: CPU registers, cache, physical RAM, and hard drive space. Many applications require more information than can be held in physical RAM. This is especially true when the operating system runs multiple processes.

One solution to the problem of needing more memory is for applications to keep some information on disk and move it to main memory when necessary. There are several ways to do this.

One option is for the application itself to decide what information is kept in each place (memory or disk) and to move it back and forth. The disadvantages are the difficulty of design and implementation, and the fact that the interests of two programs may conflict.

The alternative is to use virtual memory. This method combines primary and secondary memory, which makes the computer appear to have more main memory than it really does.

This method is invisible to processes. The maximum amount of memory a process can appear to have depends on the characteristics of the processor. For example, on a 32-bit system, the maximum virtual address space is 4GB.
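The 4GB figure follows directly from the address width: a 32-bit address can name 2^32 distinct bytes. Here is a minimal check, assuming byte-addressable memory and 1GB counted as 2^30 bytes; the function name is purely illustrative.

```python
GIB = 2 ** 30  # one gigabyte counted as 2^30 bytes (assumption)


def address_space_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses an address of this width can name."""
    return 2 ** address_bits


print(address_space_bytes(32) // GIB)  # 4
```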

As a result, virtual memory makes the application programmer’s job easier. There is no need to move data between different storage levels by hand. The operating system implements virtual memory in software, but it relies on hardware support, so the two work together.

How Does Virtual Memory Work?

The Memory Management Unit (MMU) translates virtual addresses into physical addresses. The OS determines which parts of a program are kept in physical RAM. It also maintains the address translation tables that the MMU uses.

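When a program references an address that is not in RAM, the MMU raises an exception (a page fault) and the operating system takes over. It finds free physical space and retrieves the information from disk. Then, it updates the translation tables. Finally, the program continues its execution.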
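The sequence above (exception, load from disk, update the tables, resume) can be sketched as a toy simulation. Everything here is an assumption made for illustration: the 4096-byte page size, the `TinyVM` class, and the dictionary that stands in for the disk. It also never runs out of frames, which a real system has to handle.

```python
PAGE_SIZE = 4096  # assumed page size in bytes


class PageFault(Exception):
    """Raised when a virtual page has no entry in the page table."""


class TinyVM:
    def __init__(self, disk):
        self.disk = disk        # page number -> page contents (stand-in for disk)
        self.page_table = {}    # page number -> frame number
        self.frames = []        # physical frames, indexed by frame number
        self.faults = 0

    def translate(self, vaddr):
        """MMU role: map a virtual address to (frame, offset), or fault."""
        page, offset = divmod(vaddr, PAGE_SIZE)
        if page not in self.page_table:
            raise PageFault(page)
        return self.page_table[page], offset

    def handle_fault(self, page):
        """OS role: find space, load the page from disk, update the table."""
        self.faults += 1
        self.frames.append(self.disk[page])  # "finding space" is just appending
        self.page_table[page] = len(self.frames) - 1

    def read(self, vaddr):
        try:
            frame, offset = self.translate(vaddr)
        except PageFault as fault:
            self.handle_fault(fault.args[0])
            frame, offset = self.translate(vaddr)  # retry the original reference
        return self.frames[frame][offset]


disk = {0: bytes([1]) * PAGE_SIZE, 1: bytes([2]) * PAGE_SIZE}
vm = TinyVM(disk)
first = vm.read(PAGE_SIZE + 5)   # page 1 is not resident yet: one fault
second = vm.read(PAGE_SIZE + 9)  # page 1 is now resident: no fault
```

The program only ever calls `read`; the fault and the reload happen behind its back, which is why the mechanism is invisible to processes.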

In most computers, the address translation tables are located in physical memory. This means that a reference to a virtual address needs two memory references: one to read the translation table and one to access the data. To speed this up, most CPUs include an on-chip MMU with a translation lookaside buffer (TLB), which holds recent address translations.

A translation found in this buffer requires no additional memory reference, which saves time during translation. In some processors, this is done entirely in hardware. In others, operating system assistance is required.
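To make the saving concrete, the sketch below puts a small cache of recent translations in front of a page table and counts hits and misses. The `TLB` class, its capacity, and the least-recently-used eviction policy are assumptions for illustration; real TLBs are hardware structures and their replacement policies vary.

```python
from collections import OrderedDict


class TLB:
    def __init__(self, page_table, capacity=4):
        self.page_table = page_table   # page -> frame, stand-in for the in-memory table
        self.capacity = capacity
        self.entries = OrderedDict()   # recent page -> frame translations
        self.hits = 0
        self.misses = 0

    def lookup(self, page):
        if page in self.entries:
            self.hits += 1
            self.entries.move_to_end(page)    # mark as most recently used
            return self.entries[page]
        self.misses += 1
        frame = self.page_table[page]         # the extra reference to the table
        self.entries[page] = frame
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used entry
        return frame


tlb = TLB({0: 7, 1: 3, 2: 9}, capacity=2)
frames = [tlb.lookup(page) for page in [0, 0, 1, 0, 2, 1]]
print(frames, tlb.hits, tlb.misses)  # [7, 7, 3, 7, 9, 3] 2 4
```

Only the misses pay for the second memory reference; every hit is answered from the buffer alone.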

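When the operating system has to assist, a missing translation raises an exception. The system updates the TLB and then replays the original reference. Hardware that supports virtual memory often also provides memory protection. The MMU can behave differently depending on the type of reference (read, write, or execute) and on the mode the CPU is running in.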

This allows the OS to protect its own code and data. It also prevents applications from interfering with each other.
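One way to picture this protection is as a set of permission flags stored with each page's translation and checked on every access. The field names, the `check_access` helper, and the `ProtectionFault` exception below are hypothetical; actual page table entry formats are processor-specific.

```python
from dataclasses import dataclass


class ProtectionFault(Exception):
    """Raised when an access violates the page's permissions."""


@dataclass
class PageEntry:
    frame: int
    readable: bool = True
    writable: bool = False
    executable: bool = False
    kernel_only: bool = False


def check_access(entry, kind, kernel_mode=False):
    """Return the frame number if the access is allowed, otherwise raise."""
    if entry.kernel_only and not kernel_mode:
        raise ProtectionFault("user-mode access to a kernel page")
    allowed = {
        "read": entry.readable,
        "write": entry.writable,
        "execute": entry.executable,
    }[kind]
    if not allowed:
        raise ProtectionFault(f"{kind} not permitted on this page")
    return entry.frame


code_page = PageEntry(frame=2, executable=True)
kernel_page = PageEntry(frame=5, writable=True, kernel_only=True)

print(check_access(code_page, "execute"))                  # 2
print(check_access(kernel_page, "write", kernel_mode=True))  # 5
```

In this sketch a user-mode write to `code_page`, or any user-mode access to `kernel_page`, raises `ProtectionFault` instead of returning a frame.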

Using Virtual Memory

When virtual memory is in use and the CPU accesses an address, the hardware translates it. The translation either produces a physical address or indicates that the data is not in main memory.

In the first case, the memory reference completes normally. The software accesses the required location and continues working.

In the second case, the operating system handles the situation. It either lets the program continue or stops it, depending on the condition.

This technique simulates more memory than the physical RAM that is available. Therefore, programs run regardless of the size of physical memory.

This illusion is supported by the address translation mechanism together with fast disk storage. At any given moment, only a portion of the virtual address space is held in physical memory. The rest remains on disk and can be brought in when it is referenced.

The CPU can only access the part of virtual memory that is in main memory, and a program's references shift as it runs. Therefore, some pieces of virtual memory need to be brought from disk into main memory, while others are written back to disk. Virtual memory is now indispensable for modern operating systems.

Since only a few parts of each process are in memory, more processes fit. Moreover, time is saved because unused parts are never loaded or swapped out.

However, the operating system must manage this layout effectively. Additionally, virtual memory simplifies loading a program for execution through relocation: the program can run at any location in physical memory.

In a steady state, the main memory is full of process fragments. As a result, the CPU and OS have direct access to many processes. To load a new part, the OS must therefore remove another part.

If a part is removed just before it is needed, it has to be loaded again almost immediately. Excessive swapping of this kind is called thrashing (hyper-paging). Here, the system spends more time swapping than executing instructions.

To prevent this, the system tries to predict which parts are least likely to be needed soon. This estimate is based on recent usage history and relies on the principle of locality: references to code and data within a process tend to cluster.
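Predicting from recent usage can be illustrated with least-recently-used (LRU) replacement, which evicts the page that has gone unused the longest. The sketch below assumes exact LRU, which real systems usually only approximate, and the reference strings are made up. It counts how many times a page has to be loaded for a clustered access pattern and for one that cycles through more pages than there are frames.

```python
from collections import OrderedDict


def count_faults_lru(references, num_frames):
    """Count page faults for a reference string under LRU replacement."""
    resident = OrderedDict()  # pages in memory, least recently used first
    faults = 0
    for page in references:
        if page in resident:
            resident.move_to_end(page)    # page was just used
            continue
        faults += 1
        if len(resident) >= num_frames:
            resident.popitem(last=False)  # evict the least recently used page
        resident[page] = True
    return faults


local = [1, 2, 1, 2, 1, 2, 3, 4, 3, 4]  # references cluster on a few pages
cyclic = [1, 2, 3, 1, 2, 3, 1, 2, 3]    # cycles through three pages

print(count_faults_lru(local, 2))   # 4
print(count_faults_lru(cyclic, 2))  # 9
print(count_faults_lru(cyclic, 3))  # 3
```

With two frames, the clustered pattern faults only when it moves to new pages, while the cyclic pattern faults on every single reference, which is the excessive swapping described above. Giving it one more frame removes the problem.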

Therefore, a process needs only a few of its parts over any short period. One way to confirm the principle of locality is to measure how processes perform in a virtual memory environment. The principle suggests that virtual memory schemes can work efficiently.

For virtual memory to be practical, two components are required. First, hardware support is needed. Second, the OS must manage the movement of pages or segments between main memory and secondary storage.

Once the physical address is obtained, the hardware checks the cache. The lookup succeeds if the data was used recently and is still cached. If it fails, the data is fetched from main memory or, if it is not there either, from disk.

Paging Technique

Virtual memory is usually implemented with paging. In paging, the least significant bits of the virtual address are preserved. These bits are used directly as the least significant bits of the physical address.

The most significant bits are treated differently. They are used as a key into the address translation table (the page table) to find the remaining part of the physical address.
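The split between low and high bits can be shown with a few bit operations. This sketch assumes 4096-byte pages (12 offset bits) and a single-level table held in a dictionary; the mapping in `page_table` is a made-up example.

```python
OFFSET_BITS = 12                      # assumed 4096-byte pages
OFFSET_MASK = (1 << OFFSET_BITS) - 1


def translate(vaddr, page_table):
    page_number = vaddr >> OFFSET_BITS  # most significant bits: key into the table
    offset = vaddr & OFFSET_MASK        # least significant bits: copied unchanged
    frame_number = page_table[page_number]
    return (frame_number << OFFSET_BITS) | offset


page_table = {0x12345: 0x00ABC}       # hypothetical single mapping
paddr = translate(0x12345678, page_table)
print(hex(paddr))  # 0xabc678
```

The low three hex digits (`678`) pass through untouched, while the upper digits (`12345`) are replaced by whatever frame number the table holds for that page.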

Conclusion

In short, virtual memory manages physical RAM resources efficiently and allows large programs to run. For these reasons, it is significant in modern computing.

Simulating more RAM than is physically installed is essential for running multiple applications at once.

Both programmers and users benefit from understanding the principles and functions of virtual memory, so that they can appreciate its importance in maintaining system performance and stability.
